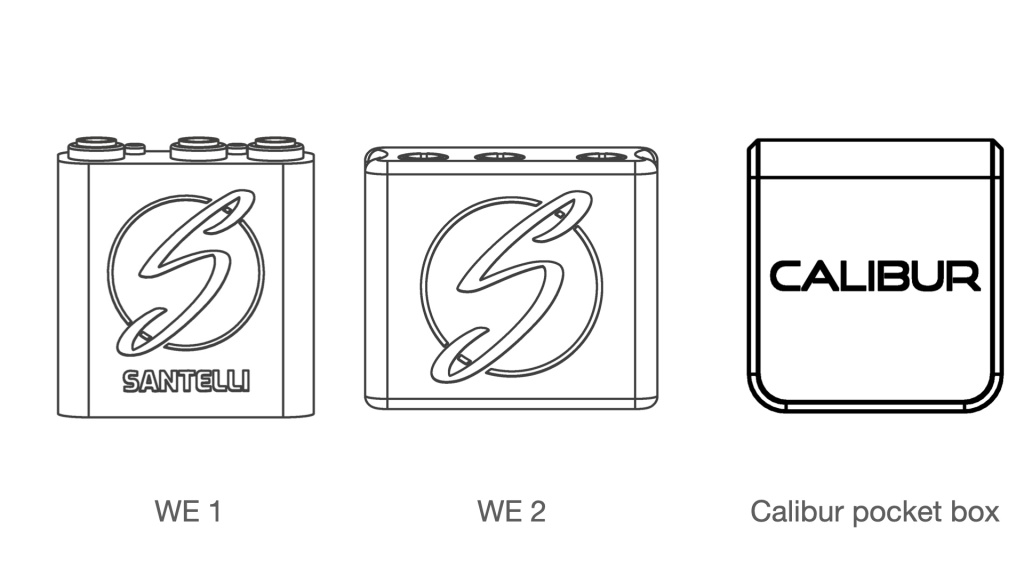

We nicknamed the project “wire eater” among ourselves, so I will refer to the devices as WE versions. The plan was set in motion: ship WE-1, incorporate feedback and develop WE-2 within 3 months. The goals for WE-2: make the app cross-platform (Android and iOS), get bellguard grounding accuracy to 90-95% on épée, and deliver over 100 devices for the clubs to test. In other words, a broad beta test for WE-2. We planned the testing to take place in November.

The sprint started in mid-August, and the first clubs buying WE-1 planned to restart fencing in September. We agreed to deliver in time for the reopening. For the first few weeks development and production ran simultaneously, which was demanding, but delivering a product to actual customers was very exciting. We finished just in time:

Some pictures from a training session at PSE. The kids had fun, and we took plenty of notes and gathered valuable insights.

Posted by Calibur Fencing on Thursday, 1 October 2020

The kids intuitively started to use our products and really enjoyed themselves

In the meantime we made a detailed roadmap for WE-2 with the 3 goals above in mind. Let’s go through them one by one.

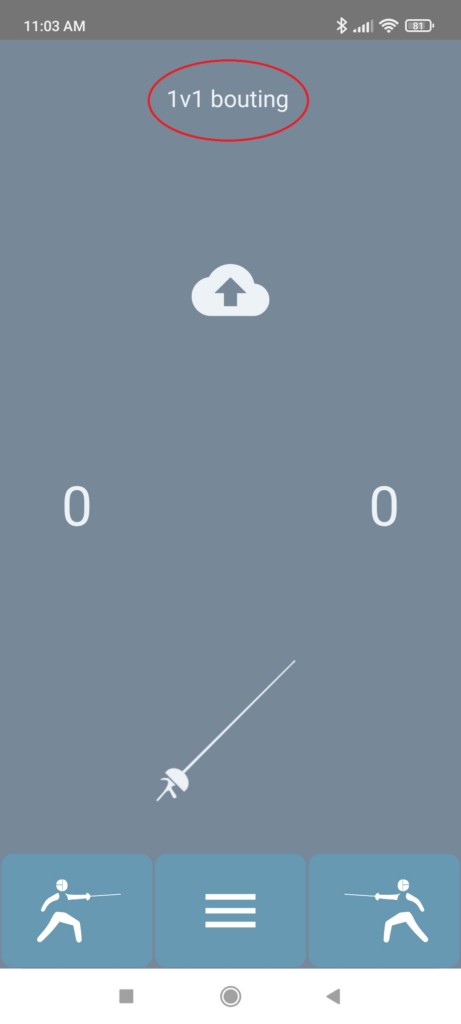

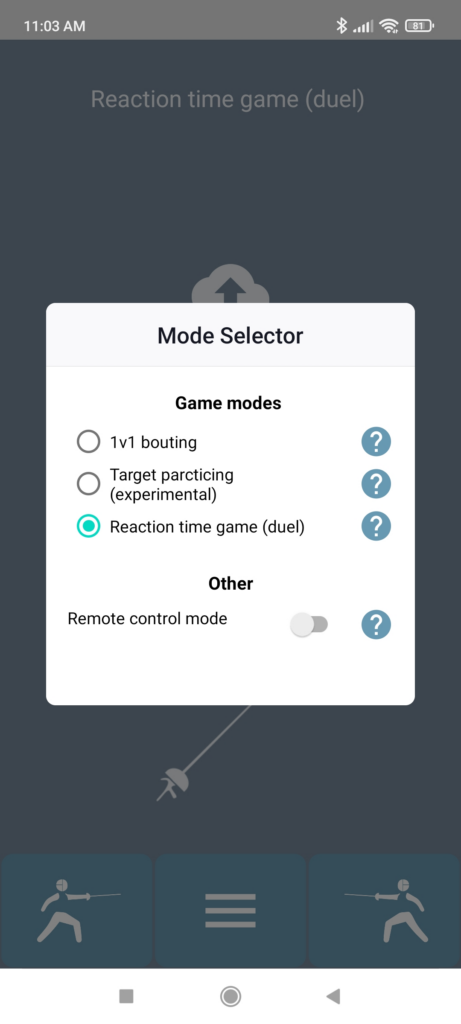

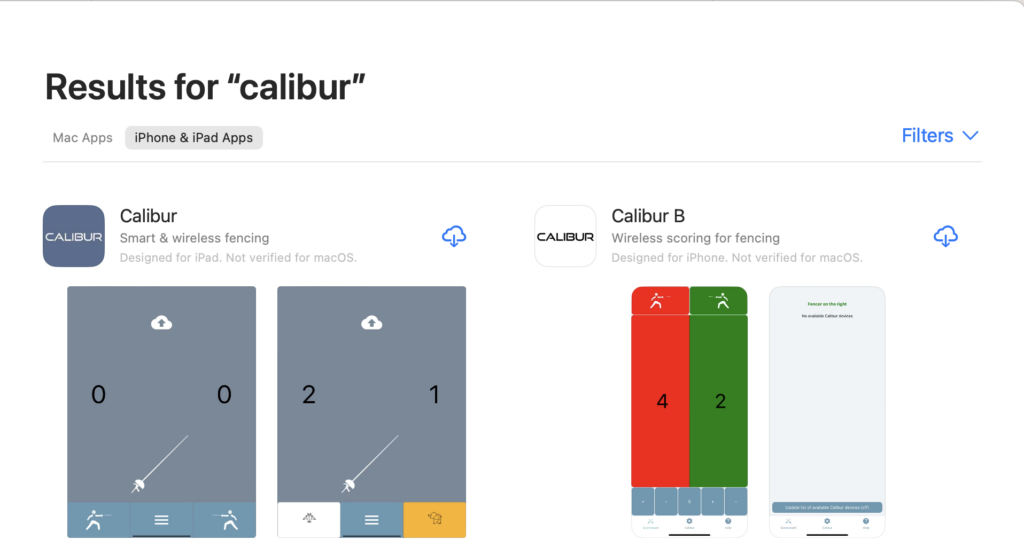

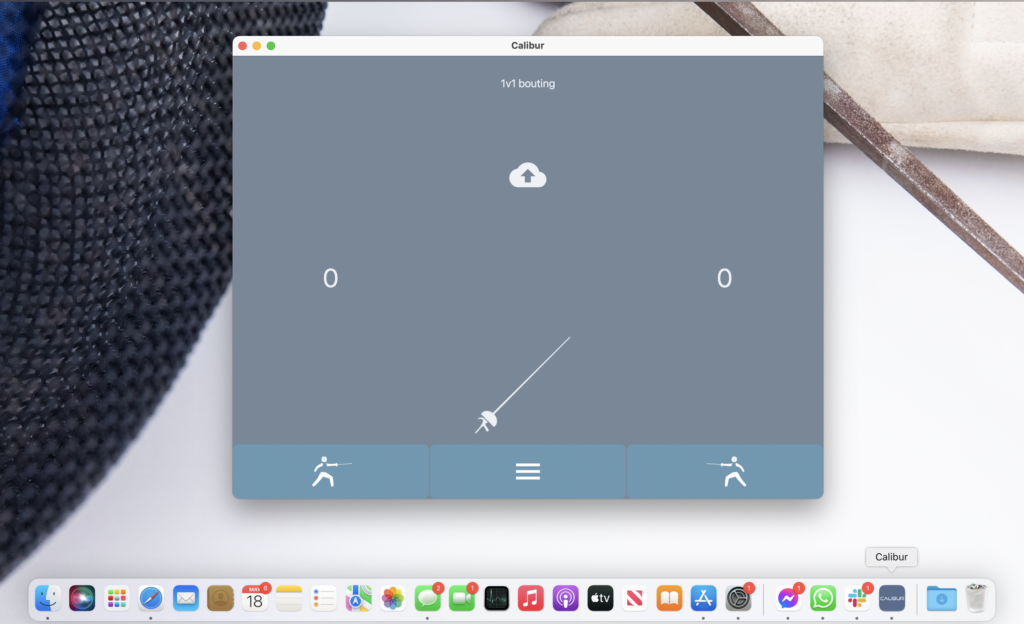

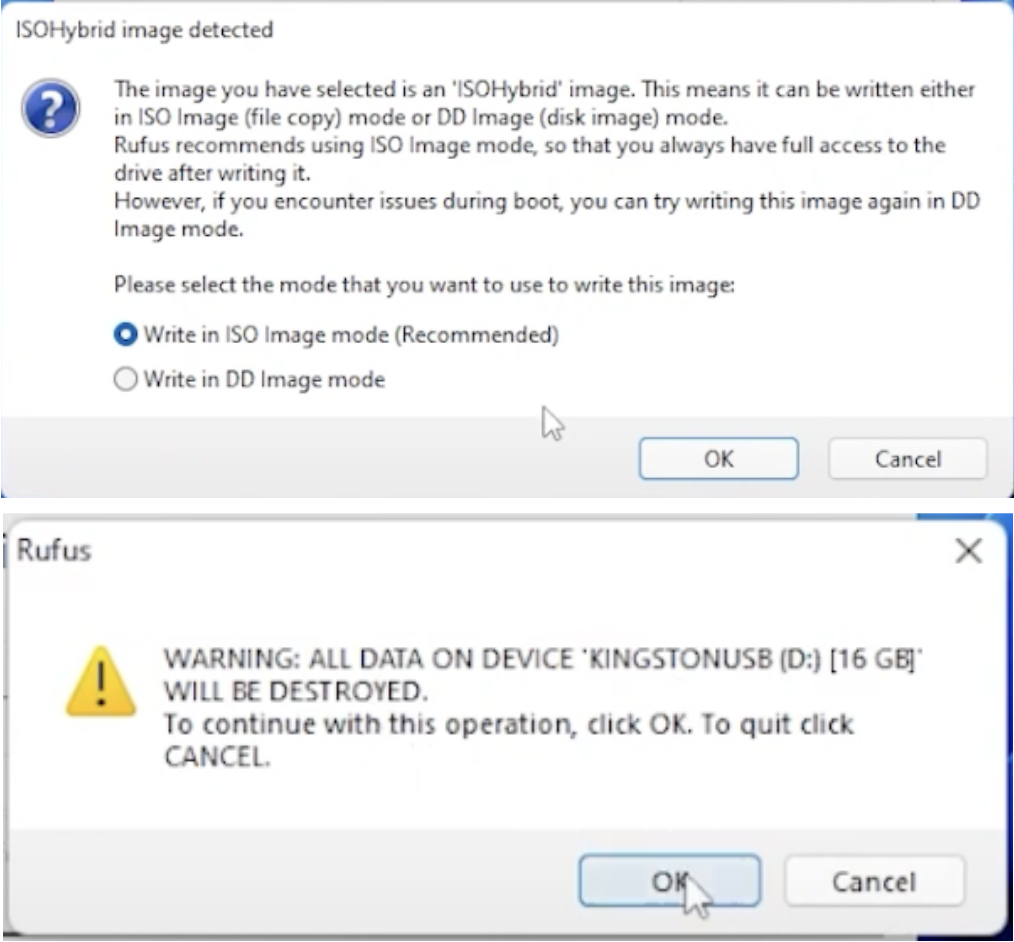

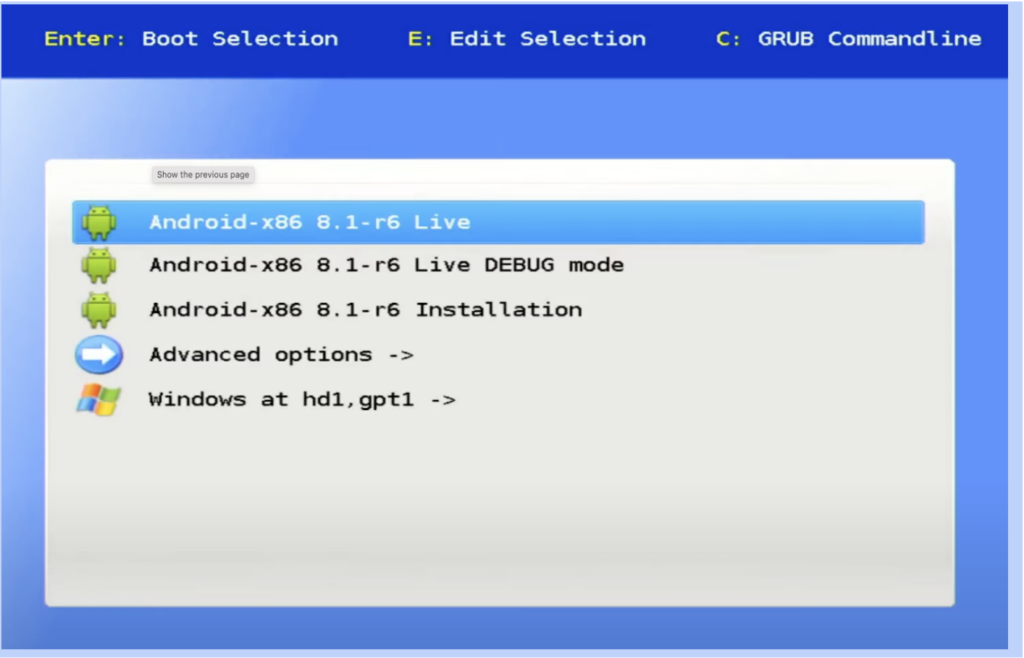

Making the app cross-platform

It shouldn’t take particularly precise planning. We take what we have for Android and replicate it for iPhones, right? Well, Apple strictly controls everything, so why wouldn’t that be true for wireless devices? If we want to connect something to iPhones, we need to use a wireless chip approved by Apple. If you had to guess whether we had used one like that or not, where would you put your money? On “not”, of course. WE-1 only supports épée and does not have any grounding capability, but it has a very stable and fast connection with the phones. That part was already fine-tuned. Changing the chip means throwing all that away and starting over.

Getting accuracy over 95%

Bellguard grounding should work 9+ times out of 10 in a test environment. Our model needs a large pool of data to operate. How would we get that? Obviously it’s not an option to gather it at the clubs every time we change something. So a lot of nights went like this:

999 green bottles on the wall, if one green bottle should accidentally fall, 998 green bottles on the wall…

Both goals turned out to be an uphill battle, especially in the given timeframe. All the new wireless chips turned out to be either too slow or too quick to drop the connection with the phones. Every time we got something working in the lab and took it to a real training session, it failed completely. Special thanks to OSC and their fencers for letting us do our it-worked-yesterday-I-swear show twice a week. The connection drops proved to be a very stubborn issue, disrupting basically every other test we tried to run.

[supsystic-gallery id=6]

OSC helped a lot by sharing their space with us for testing

Producing 100 pieces

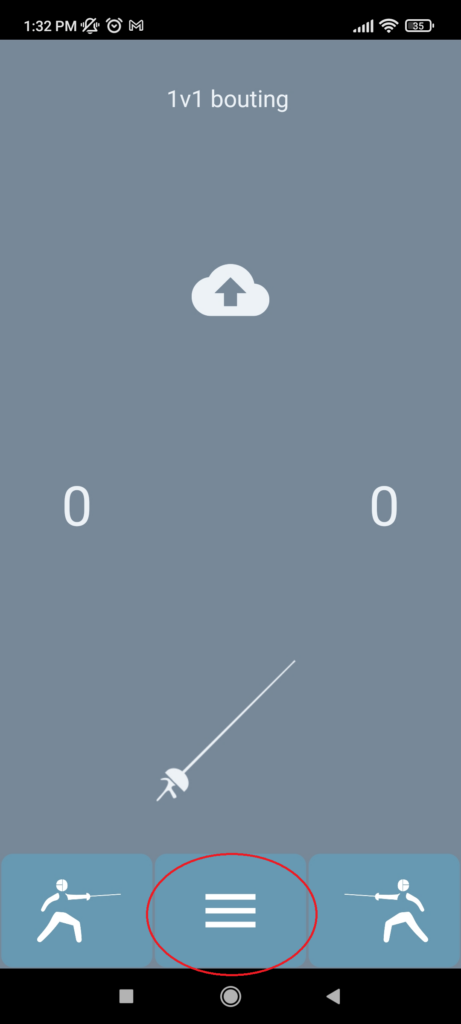

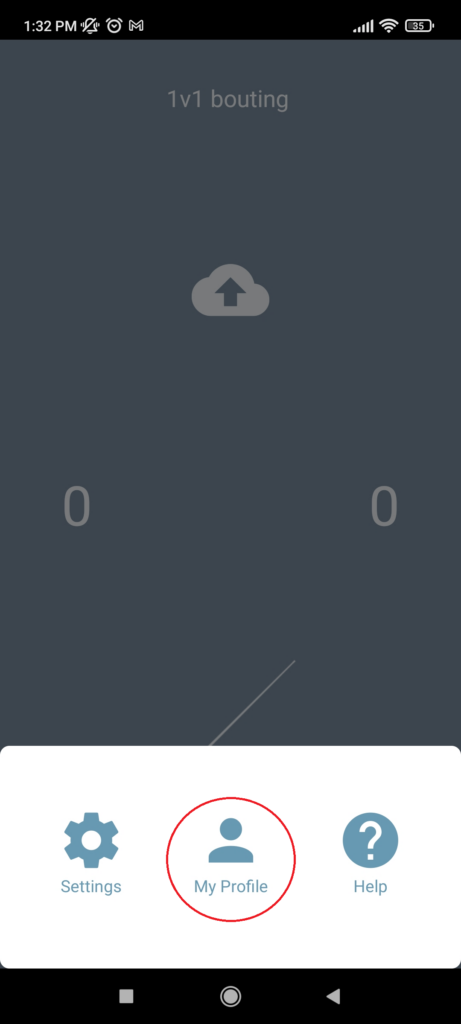

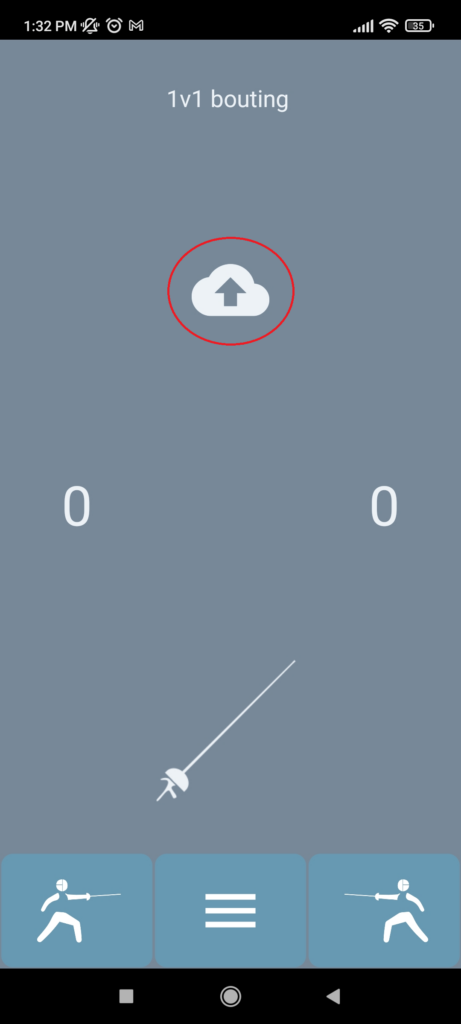

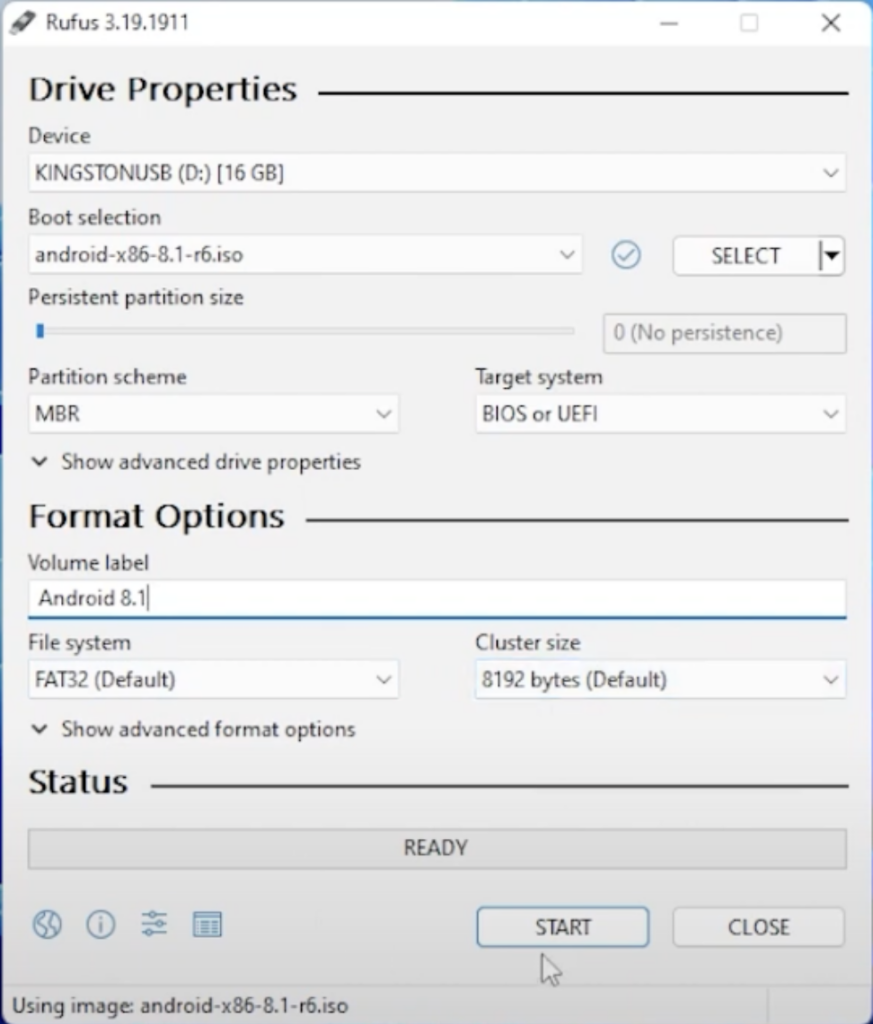

We were approaching the end of October. The clubs that had signed up for testing were all set and waiting for their devices. We had just finished the new enclosure design, and for the first time the application was approved on both platforms.

[supsystic-gallery id=8]

It's much more convenient to connect the yellow penguin than a QR code

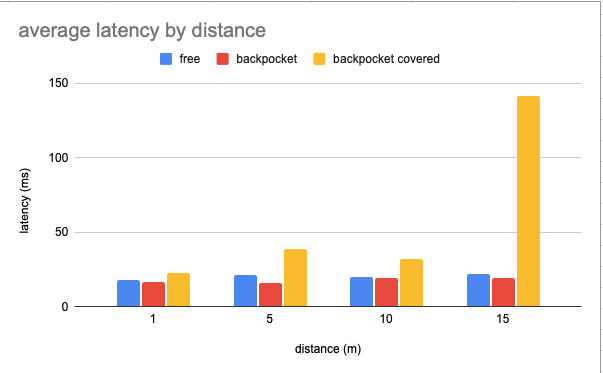

But the connection issues still stayed with us. We had only a week left to finish, and we bet on a last-resort solution: changing the antenna design. We also urgently needed a plan B, and getting the devices out of the pockets made the connection slightly better.

[supsystic-gallery id=7]

Desperate times call for desperate measures

I won’t go into detail about the things we tried on the last weekend in a rush to find a way to attach the devices to the body. The new antenna design, though far from perfect, proved to be above the threshold, and we decided to give it a go.

We got everything ready, and after an intensely laborious week with 3 hours of sleep a night on average, we tested, packed and labelled all the packages for shipping. And the épée beta started.

Final touches before sending out the beta test packages (Nov. 11).

Posted by Calibur Fencing on Sunday, 22 November 2020